TechLetter #2 - future of federated privacy, uneven approach to GDPR compliance

Welcome to the second letter!

As expected, the form of the letter evolves. I realised that an “Other” section may be handy for items that are difficult to classify.

Security

1)

The SAD DNS attack means that the old days of DNS cache poisoning attacks are back. This technique would allow attackers to fool domain name resolvers about the IP address of the website or service a user wants to reach. A fraudulent name-to-IP mapping could lead to abuse.

we find over 34% of the open resolver population on the Internet are vulnerable (and in particular 85% of the popular DNS services including Google’s 8.8.8.8).

Not good news:

In addition to Linux, we have verified that other major OS kernels are vulnerable as well, albeit with lower global rate limit — 200 in Windows and FreeBSD, and 250 in MacOS. It is concerning that not a single OS is aware of the side channel potential of global rate limits

Nice accessible explanation here:

Rate limiting seems innocuous until you remember one of the core rules of data security: don’t let private information influence publicly measurable metrics

Precisely.
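To make the rule in that quote concrete, here is a minimal toy model of how a shared rate limit can leak private state. It is a sketch under stated assumptions, not the actual attack code: the `ToyHost` class, the budget of 50 errors per window, and the port numbers are all hypothetical. The attacker sends a batch of probes whose replies it never sees, then one probe from its own address. Whether that last probe is answered reveals whether the batch hit an open port.

```python
class ToyHost:
    """Toy model of a host with one global budget for ICMP error replies."""

    def __init__(self, open_ports, limit=50):
        self.open_ports = set(open_ports)  # private state the attacker wants to learn
        self.limit = limit                 # assumed per-window budget, illustrative only
        self.budget = limit

    def new_window(self):
        self.budget = self.limit

    def receive_udp(self, port):
        """Return True if an ICMP 'port unreachable' error is sent back."""
        if port in self.open_ports:
            return False       # open port: packet accepted, no error generated
        if self.budget == 0:
            return False       # closed port, but the shared budget is exhausted
        self.budget -= 1
        return True


def batch_contains_open_port(host, ports, known_closed_port=1):
    """Attacker's view: a batch of spoofed probes, then one probe of its own."""
    assert len(ports) == host.limit
    host.new_window()
    for port in ports:
        host.receive_udp(port)  # replies go to the spoofed address, never observed
    # Only this reply is visible to the attacker. It arrives only if the
    # batch left some budget unused, i.e. at least one probed port was open.
    return host.receive_udp(known_closed_port)


host = ToyHost(open_ports={40123})
print(batch_contains_open_port(host, list(range(40100, 40150))))  # True
print(batch_contains_open_port(host, list(range(40200, 40250))))  # False
```

The public metric (is my probe answered?) depends on the private one (which port is open), which is exactly what the quoted rule warns against.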

2)

The UK announced the creation of its National Cyber Force, listing some of the types of cyber operations within its reach. We’ll probably hear more soon. The actual cybersecurity doctrine remains classified.

Privacy

1)

Next year (and the ones after it) will be the years of federated learning, a technology that may make it possible to scale certain uses of machine learning in privacy-preserving ways. It may make it possible to analyse or use data without collecting it in raw form. The thinking is that the less user data is held, the better for privacy. Apple is, to some extent, looking into the use of such techniques. Google is exploring it and even wants to implement it in a web browser as a privacy-preserving ad targeting technology. Much indicates that the federated learning ship has set sail (“analyzing data without ever actually exposing it. It sounds impossible, but there are computational techniques for allowing data to be manipulated without the user ever actually having access to any of it”). The big question is whether this kind of technology is (at least currently) limited to big, well-resourced tech players. I recently analysed the challenges that lie ahead for anyone who wants to deploy advertising systems with privacy in mind. It is not simple to do and requires broad ecosystem changes.
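For readers who want a feel for the idea, below is a minimal sketch of one common flavour of federated learning, federated averaging. This is my illustrative choice, not a description of what Apple or Google actually deploy. Each client fits a one-parameter model `y = w * x` on its own data and shares only the updated weight; the server averages the weights. All function names and the toy data are assumptions.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Run a few gradient steps on one client's private (x, y) pairs."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global, clients):
    """Server step: average the client weights, weighted by local dataset size."""
    total = sum(len(data) for data in clients)
    return sum(local_update(w_global, data) * len(data) for data in clients) / total


def train(clients, rounds=30):
    w = 0.0
    for _ in range(rounds):
        w = federated_round(w, clients)
    return w


# Toy private datasets, all generated from y = 3 * x. They never leave the client.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
]
print(round(train(clients), 3))
```

Note that sharing weights instead of raw data is not automatically private; real systems typically add further protections, which this sketch leaves out.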

2)

Interesting input from the UK’s Competition and Markets Authority report:

Google told us that it only sends imprecise location data to third parties to reflect the principles of data minimisation and proportionality required by GDPR, whereas the same concerns do not arise for disclosures to DV360 [edit - a Google service] as no third-party data sharing occurs.

This would imply that the data used “outside” (made available to external parties) is privacy-proofed, while the data used internally is not.
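The quote does not say how the location data is made imprecise. One simple way to picture data minimisation here is coordinate rounding, sketched below. The `coarsen` function and the choice of two decimal places are assumptions for illustration only; 0.01 degrees of latitude corresponds to roughly 1.1 km.

```python
def coarsen(lat, lon, decimals=2):
    """Reduce location precision by rounding coordinates before sharing them."""
    return (round(lat, decimals), round(lon, decimals))


precise = (51.507351, -0.127758)   # example coordinates in central London
shared = coarsen(*precise)
print(shared)                      # (51.51, -0.13)
```

In this picture, the point of the observation above is that the precise value would still be available internally, while only the coarsened one leaves the company.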

"A smaller intermediary has no choice but to comply with data protection regulations because all its partners demand it; it cannot afford to be a target of regulators or run the risk of partners ceasing to do business with it. On the other hand, Facebook and Google can take more risk as they can afford to fight regulators if and when they are made the subject of enforcement action".

If I read this right, it may suggest that big players can gamble on what GDPR does or does not require, and do as they please (?).

All this is important due to the ongoing changes in web architecture that promote privacy on the one hand and demand some changes in how web browsers work on the other. Navigating this at the many companies affected is both fascinating and challenging.

3)

My privacy analysis of a mechanism letting websites discover which apps are installed on a smartphone.

In the real world, new web features often end up being abused. That is a fact. We should consider the risks diligently. For example, some concerns exist over the risk of creating conglomerates of websites and apps determined to track users. This would simply involve abusive websites and app makers joining forces and configuring their apps to point to a specific website. Such websites would then be able to use the fact that an app is installed or uninstalled as a signal. Like a fingerprint. This may be a more complex problem than just privacy (though it is seen as harmful by Firefox). It may also include anti-competition and consumer protection issues.

It’s already deployed in Chrome on Android.
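To illustrate why installed/uninstalled signals can act like a fingerprint, here is a toy sketch. It does not use the browser mechanism itself; the `install_fingerprint` function and the app identifiers are hypothetical. The point is only that k cooperating apps give up to 2^k distinguishable states.

```python
def install_fingerprint(probe_results):
    """Pack installed/not-installed answers into one integer, one bit per app."""
    fingerprint = 0
    for bit, app in enumerate(sorted(probe_results)):
        if probe_results[app]:
            fingerprint |= 1 << bit
    return fingerprint


# Hypothetical app identifiers, not real packages.
alice = {"com.example.news": True, "com.example.game": False, "com.example.shop": True}
bob = {"com.example.news": True, "com.example.game": True, "com.example.shop": False}

print(install_fingerprint(alice))  # 6
print(install_fingerprint(bob))    # 3
```

With three cooperating apps there are already eight possible values, and each extra app doubles that.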

Tech policy

1)

Europe remains at the forefront of technology regulation. Rumours abound of a desire to consider a prohibition of targeted ads in the upcoming European Democracy Action Plan. That would mean that the regulation would be clearly linked not only to technology and the web economy but also to cybersecurity and privacy. It will be interesting to see how the regulation looks in the end, and what the rest of the world will do about it.

2)

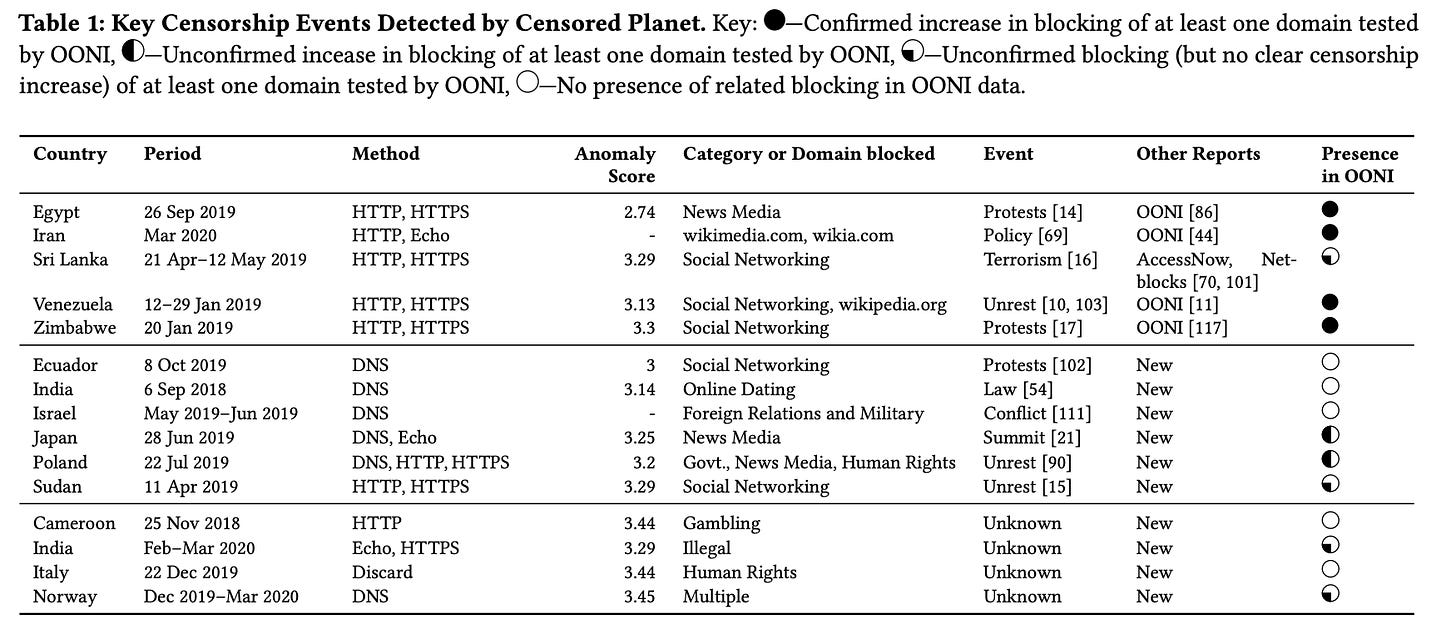

Interesting research claiming to have uncovered previously unknown instances of technical censorship measures deployed in some countries.

It considers any interference, of course, including lawful takedowns in Norway and the events following the terrorist attacks in Sri Lanka.

Other

Interesting primer on decentralised computing, the role of which will only increase (and the paradigm is important for many privacy-preserving schemes).

That’s it this time, thanks!
